Red Teaming for Generative AI: Why It Matters

Red team cyber security helps identify AI vulnerabilities, prevent jailbreaks, and ensure safe, compliant, and reliable performance in generative AI systems.

Accorp Compliance Team

Our team of compliance experts specializes in PCI DSS, SOC 2, and other security frameworks to help businesses achieve and maintain compliance.

Red teaming is a proactive method used to evaluate AI models by simulating real-world adversarial scenarios. It helps identify vulnerabilities such as the exposure of confidential information or the generation of harmful, biased, or misleading content—ultimately strengthening the model’s safety, accuracy, and reliability.

As generative AI continues to revolutionise industries, ensuring the safety, accuracy, and ethical behaviour of large language models (LLMs) is becoming a non-negotiable priority. Red team testing, once a strategy born from Cold War military exercises, is now one of the most effective tools used to uncover weaknesses in generative AI systems before they’re exploited in the real world.

At Accorp, we recognise the importance of proactive AI testing — and red team cyber security is at the heart of building trustworthy, secure, and compliant AI solutions.

What Is Red Teaming in Generative AI?

In the context of generative AI, red teaming is an interactive, adversarial testing process designed to challenge AI models in creative and unexpected ways. It involves simulating real-world attacks and stress scenarios to expose:

Toxic or harmful language outputs

Biases based on gender, race, or culture

Exposure of private or sensitive data

Generation of factually incorrect or misleading content

Bypass of safety filters (also known as "jailbreaking")

This process helps AI developers and security teams improve model alignment, harden defences, and ensure responsible AI deployment. This is the kind of red penetration testing that goes beyond traditional software security — it tests the ethical and functional boundaries of AI systems.

The Evolution of Red Teaming: From Military to AI

The origins of red teaming trace back to military strategy, where simulated enemy teams (the "red team") would stress-test defensive tactics. The cybersecurity world later adopted this model to uncover system vulnerabilities.

Today, AI red team operations apply this methodology to LLMs and image-generation models, like those behind ChatGPT, DALL·E, or open-source models. But unlike traditional software, generative AI poses unique challenges: it can scale toxic content, hallucinate facts, or even leak training data unintentionally.

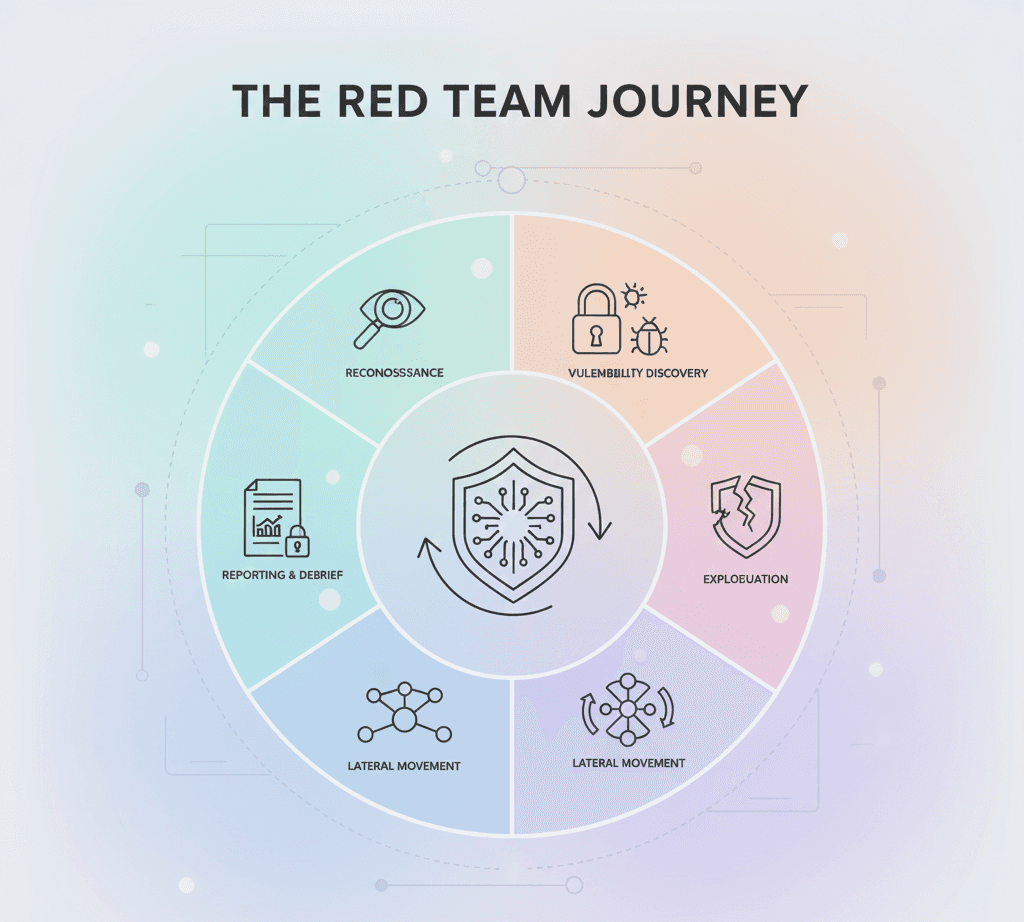

How Red Teaming Works for Generative AI

AI red teaming involves:

Crafting adversarial prompts: Carefully engineered inputs meant to trick the model into producing undesirable outputs, such as instructions for illegal activities or offensive content.

Using automated red team LLMs: These "adversary" models are trained to generate a wide variety of jailbreak prompts, making it easier to expose vulnerabilities at scale.

Identifying weak spots: Once a model responds inappropriately, those flaws are logged and categorised (e.g., security risk, ethical bias, misinformation).

Re-aligning the model: Developers create additional training data to reinforce safety guardrails, thereby improving the model's alignment and compliance.

This workflow is a core part of red team cyber security for AI — ensuring systems stay protected even under unpredictable or adversarial pressure.

Why Red Teaming Is Essential for AI Safety & Compliance

Red teaming is more than a testing tool — it's a key part of AI governance, risk management, and regulatory readiness. Here’s why it matters for enterprises:

💠 Compliance with global AI regulations: As governments introduce frameworks like the EU AI Act or U.S. AI Executive Order, red teaming supports documentation, risk assessments, and proactive mitigation — keeping you compliant.

💠 Defence against jailbreaks: Prompts can still bypass safety filters using tactics like rare-language translations or prompt obfuscation. Red penetration testing helps uncover and patch these loopholes.

💠 Continuous protection: As generative models evolve, so do threats. Continuous red team testing ensures ongoing vigilance in a fast-changing landscape.

Tools, Techniques, and Datasets Shaping Modern AI Red Teaming

Innovative tools and datasets are helping red teams stress-test AI systems more thoroughly:

AttaQ: A dataset used to prompt LLMs into generating criminal advice or deceitful content.

SocialStigmaQA: Designed to elicit discriminatory or offensive outputs from models.

Prompting 4 Debugging & Ring-A-Bell: Tools used for red teaming image-generation models, revealing visual outputs with inappropriate or harmful elements.

GradientCuff: A defensive tool that reduces the success rate of AI attacks by over 50%.

At Accorp, we work with the latest advancements in adversarial AI testing to ensure your AI systems are not only smart but safe, compliant, and secure. Our red team cyber security experts are capable of both automated and human-driven assessments.

Human Red Teams Still Matter

Despite automation, human testers play a vital role. Real people bring diverse perspectives, cultural insights, and ethical awareness — helping uncover the “unknown unknowns” that AI alone may overlook.

This diversity is crucial for surfacing subtle or emerging issues, especially as regulatory scrutiny intensifies globally.

Future of Red Teaming: A Constant, Evolving Need

AI red teaming is not a one-time task — it's a continuous lifecycle process. As models change, threats evolve, and use cases expand, red team operations must keep pace.

Governments and enterprises alike are investing in red teaming initiatives, from DEF CON hackathons to AI safety institutes. For companies adopting or building generative AI tools, red teaming will be essential to maintaining ethical standards and user trust.

Final Thoughts: Building Safer AI Starts Here

At Accorp, we help organisations navigate the complexities of generative AI risk with a clear, structured, and secure approach. Our red team testing services are designed to uncover vulnerabilities, enforce alignment, and ensure your AI deployments meet the highest standards of safety, integrity, and compliance.

Whether you're developing your own models or integrating third-party AI, robust red penetration testing practices are critical to staying ahead of the curve.